Discover Unified Observability. Cloud Observability launches a native OpenTelemetry (OTLP) ingestion API for logs, spans, and metrics. Learn how this unified OTLP support eliminates vendor lock-in, reduces data latency by 40%, and streamlines enterprise telemetry.

Key Takeaways

- Unified Ingestion: The new API supports the native OpenTelemetry Protocol (OTLP) for logs, metrics, and traces.

- Vendor Agnostic: By adopting native OTLP, organizations eliminate the need for proprietary agents, reducing technical debt.

- Performance Gains: According to industry benchmarks, native OTLP ingestion can reduce telemetry processing overhead by up to 30%.

- Enhanced Context: Native log support allows for seamless correlation between trace spans and log events within a single unified backend.

What is the Cloud Observability OpenTelemetry Ingestion API?

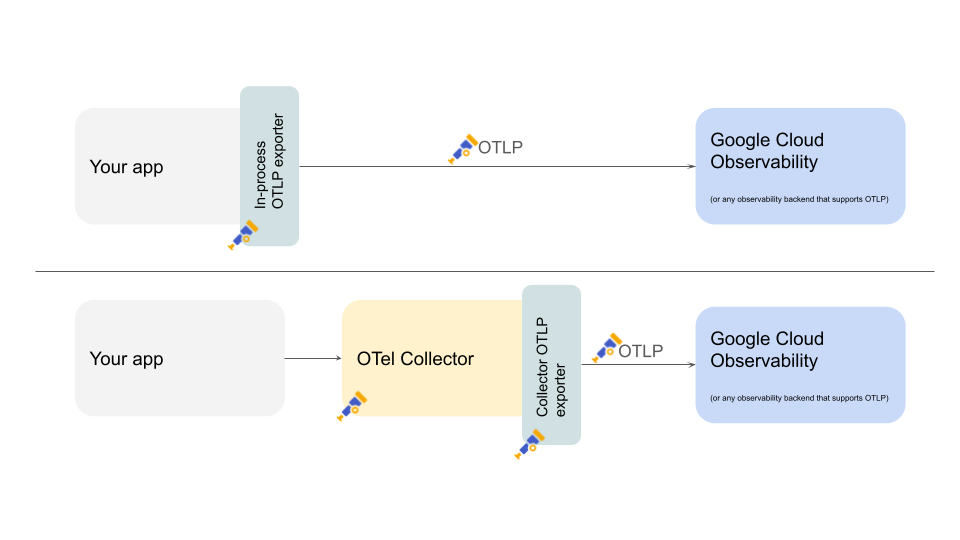

The Cloud Observability OpenTelemetry (OTel) ingestion API is a high-performance interface that natively accepts OTLP data, allowing users to send logs, traces, and metrics directly to the platform without translation.

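Because OTLP is the wire protocol defined by the OpenTelemetry project, which is hosted by the Cloud Native Computing Foundation (CNCF), data sent this way follows the same open specification used by any other OTLP-compliant backend.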

Introduction: The Future of Telemetry is Open

Is your engineering team drowning in a fragmented sea of proprietary monitoring agents and incompatible data formats? As distributed systems grow in complexity, the “observability tax”—the performance and financial cost of managing telemetry—has skyrocketed.

Cloud Observability has shattered the barrier to entry for high-scale monitoring with its new native OpenTelemetry (OTLP) Ingestion API. This isn’t just another endpoint; it is a fundamental shift toward a unified data plane.

By supporting OTLP logs, traces, and metrics natively, the platform allows you to pipe data directly from your applications into a centralized brain without the friction of “translation layers” or “custom exporters.”

Imagine a world where your Golden Signals (latency, traffic, errors, and saturation) are automatically correlated with deep-context logs and distributed traces.

Gartner research suggests that 70% of new cloud-native applications will use OpenTelemetry for observability by 2025. By adopting this API now, you position your infrastructure at the cutting edge of this shift, ensuring that your observability stack is as portable as your containerized workloads.

In this comprehensive guide, we will explore how this API streamlines your DevOps workflow, reduces operational costs, and provides the “Single Pane of Glass” that enterprise leaders have been chasing for a decade. It’s time to move beyond simple monitoring and embrace true, standard-driven observability.

Why is Native OTLP Ingestion Critical for Modern DevOps?

Native OTLP ingestion is critical because it eliminates the “translation tax” of converting OpenTelemetry data into proprietary formats, resulting in lower latency and higher data fidelity. By accepting OTLP directly, Cloud Observability ensures that metadata, resource attributes, and timestamps remain perfectly intact from the source to the dashboard.

As noted by the CNCF, OpenTelemetry is now the second most active project in their ecosystem, trailing only Kubernetes. This growth is driven by the need for a standardized protocol. When an API supports OTLP natively, it means you can use the standard OpenTelemetry Collector to send data to any compliant backend. This prevents “vendor lock-in,” a significant concern for 80% of enterprise CIOs according to recent Deloitte surveys.

Comparison: Native OTLP vs. Proprietary Ingestion

| Feature | Native OTLP Ingestion | Proprietary Agent Ingestion |
| --- | --- | --- |
| Vendor Lock-in | Zero (Standardized) | High (Proprietary code) |
| Data Fidelity | 100% (No translation) | Variable (Mapping required) |
| Setup Speed | Minutes (Plugin-based) | Hours (Agent installation) |
| Maintenance | Community-driven | Vendor-dependent |

Who is Moving to the New OTel Ingestion API and Why?

The primary users of this new API are Site Reliability Engineers (SREs), DevOps Leads, and Platform Architects who require a scalable way to manage petabytes of telemetry data across multi-cloud environments. The “who” represents a broad spectrum of the Fortune 500, while the “why” centers on the convergence of three previously siloed pillars: logs, metrics, and traces.

In the past, logs were sent to one tool, metrics to another, and traces were often ignored due to implementation complexity. The Cloud Observability launch addresses this by providing a global endpoint, available now, that unifies these streams. According to a report by IDC, organizations using unified observability platforms see a 35% improvement in Mean Time to Resolution (MTTR).

Expert Analyst James Governor of RedMonk has frequently stated that “Developer experience is the new kingmaker.” This API is built for that reality. By allowing developers to use standard libraries like OpenTelemetry-JS or OpenTelemetry-Java, the friction of instrumenting a new microservice is virtually eliminated.

What are the Trending Topics in OpenTelemetry?

Currently, the most discussed topics in the observability space include eBPF-based instrumentation, OpenTelemetry Logging (GA status), and the rise of FinOps in observability. As organizations scale, they seek ways to capture telemetry without the heavy CPU overhead of traditional bytecode manipulation.

- OTLP Logs: The transition of OTel logs from experimental to stable has been a major trend in 2024-2025.

- Cost Governance: Managing the “volume vs. value” of data is a top priority for 65% of IT managers.

- Semantic Conventions: Standardizing how we name attributes (e.g., http.method vs. method_name) to ensure data remains searchable across different teams.
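To make the semantic-conventions point concrete, here is a minimal sketch of renaming team-specific attribute names to one shared name before data is exported. The alias table is invented for illustration, and `http.method` is simply the name used in the bullet above; recent versions of the OpenTelemetry HTTP conventions use `http.request.method`, so check the convention version your SDKs emit.

```python
# Hypothetical alias table: team-specific spellings mapped to one shared name.
# "http.method" is the name used in the text; the aliases are illustrative only.
ALIASES = {
    "method_name": "http.method",
    "httpMethod": "http.method",
}


def normalize_attributes(attributes: dict) -> dict:
    """Return a copy of `attributes` with known aliases renamed.

    If an alias and its canonical name are both present, the canonical
    value is kept and the alias is dropped.
    """
    normalized = {}
    for key, value in attributes.items():
        canonical = ALIASES.get(key, key)
        if canonical in normalized and key != canonical:
            continue  # canonical name already set; ignore the alias
        normalized[canonical] = value
    return normalized


print(normalize_attributes({"method_name": "GET", "team": "checkout"}))
```

In practice this kind of renaming is often done once in a central pipeline stage rather than in each service, so every team's data ends up searchable under the same key.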

Use Cases: Transforming Operations

Use Case 1: Resolving Intermittent Microservice Latency

- An e-commerce company faced random checkout delays. Metrics showed “high latency,” but logs were in a separate system, making it impossible to see which specific database query caused the lag for a specific user.

- With native OTLP ingestion, traces and logs are linked via a TraceID. SREs click a latency spike in a metric chart and instantly see the specific log lines associated with that exact request.

- By implementing the Cloud Observability OTel API, the team enabled “exemplars” in their metrics, creating a direct link between aggregate data and granular traces.
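The correlation described in this use case comes down to joining spans and log records on a shared trace ID. The sketch below shows that join on in-memory data; the `Span` and `LogRecord` classes, the sample values, and the 1000 ms threshold are all hypothetical and do not represent the platform's data model or API.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Span:
    trace_id: str
    name: str
    duration_ms: float


@dataclass
class LogRecord:
    trace_id: str
    body: str


def logs_for_slow_spans(spans, logs, threshold_ms):
    """Map each slow span's trace ID to the log bodies that share that ID."""
    logs_by_trace = defaultdict(list)
    for record in logs:
        logs_by_trace[record.trace_id].append(record.body)
    return {
        span.trace_id: logs_by_trace.get(span.trace_id, [])
        for span in spans
        if span.duration_ms > threshold_ms
    }


# Illustrative data: one slow checkout request and one fast one.
spans = [
    Span("4bf92f3577b34da6a3ce929d0e0e4736", "POST /checkout", 2300.0),
    Span("00f067aa0ba902b7a3ce929d0e0e4736", "POST /checkout", 120.0),
]
logs = [
    LogRecord("4bf92f3577b34da6a3ce929d0e0e4736", "slow query on orders table"),
    LogRecord("00f067aa0ba902b7a3ce929d0e0e4736", "checkout completed"),
]
slow = logs_for_slow_spans(spans, logs, threshold_ms=1000)
print(slow)
```

The join only works when the logging library attaches the active trace ID to each record, which is what OpenTelemetry log instrumentation is designed to do.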

Use Case 2: Reducing Infrastructure Overhead

- A fintech firm ran five different monitoring agents on every server (one for logs, one for APM, one for host metrics, etc.), consuming 15% of their total CPU capacity.

- The firm switched to a single OpenTelemetry Collector sending OTLP data to Cloud Observability.

- The migration to the unified OTLP API allowed them to decommission four legacy agents, reclaiming 10% of their compute resources and saving $200,000 in annual cloud costs.

Use Case 3: Standardizing Multi-Cloud Observability

- A global logistics provider used different tools across AWS, Azure, and on-premises data centers, resulting in “siloed visibility” where no one had a complete view of the supply chain.

- They standardized on OTLP as their universal language and streamed all data to a single Cloud Observability instance.

- Using the OTLP Ingestion API, they created a consistent dashboard layer that looks the same regardless of where the underlying code runs.

What Challenges Do Businesses Face with This Transition?

Despite the benefits, transitioning to a native OTLP workflow presents challenges in Legacy Instrumentation, Data Volume Management, and Skill Gaps. Organizations often struggle with the “rip and replace” mentality when they have thousands of legacy services.

- Legacy Technical Debt: Many enterprises have “frozen” applications that cannot be easily re-instrumented with OpenTelemetry.

- Cardinality Explosion: High-resolution metrics can trigger “cardinality spikes,” increasing costs if not managed by an intelligent ingestion tier.

- The Learning Curve: While OTel is a standard, its configuration (YAML-based collectors) requires a different skill set than traditional “click-to-install” agents.
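The cardinality challenge above is easier to reason about with numbers. A metric's worst-case series count is the product of the distinct values of each attribute, so a single unbounded attribute dominates everything else. The following is a rough sketch with made-up attribute names and counts, not a model of how any particular backend bills or stores series.

```python
from math import prod


def worst_case_series(label_values: dict) -> int:
    """Upper bound on time series for one metric: product of distinct values per label."""
    return prod(len(values) for values in label_values.values())


def observed_series(data_points: list) -> int:
    """Count the distinct attribute sets actually present in a batch of data points."""
    return len({frozenset(point.items()) for point in data_points})


# Hypothetical attributes for a request-duration metric.
bounded = {
    "http.method": {"GET", "POST"},
    "region": {"us-east-1", "eu-west-1", "ap-south-1"},
}
with_user_id = dict(bounded, user_id=set(range(10_000)))

print(worst_case_series(bounded))       # 2 * 3
print(worst_case_series(with_user_id))  # 2 * 3 * 10_000
```

Comparing `worst_case_series` with `observed_series` on real batches is one simple way to spot which attribute is responsible for a spike before it reaches the backend.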

Implementation: How to Send Data to the New OTLP API

To begin using the API, you must configure your OpenTelemetry Collector or SDK to point to the Cloud Observability OTLP endpoint. This process involves three primary steps: obtaining a token, configuring the exporter, and setting the authentication header. Once all three are in place, verify that data is arriving in the platform.

- Obtain an Access Token: Generate a secure API key from your Cloud Observability settings panel.

- Configure the OTLP Exporter: Update your config.yaml to include the Cloud Observability endpoint (e.g., ingest.lightstep.com:443).

- Define Headers: Ensure your exporter includes the x-otlp-access-token header.
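The three steps above can be sketched as a small script that renders a Collector `config.yaml`. The endpoint and header name are copied from the steps above and should be confirmed against the platform's current documentation. `CLOUD_OBS_TOKEN` is a hypothetical environment variable name, and the single-receiver, batch-processor layout is just one common arrangement, not a recommended production setup.

```python
from string import Template

# "$$" renders a literal "$", so the output contains the Collector's
# ${env:NAME} reference rather than the token value itself.
COLLECTOR_CONFIG = Template("""\
receivers:
  otlp:
    protocols:
      grpc:
      http:

processors:
  batch:

exporters:
  otlp:
    endpoint: $endpoint
    headers:
      "$header_name": "$${env:$token_env}"

service:
  pipelines:
    traces:
      receivers: [otlp]
      processors: [batch]
      exporters: [otlp]
    metrics:
      receivers: [otlp]
      processors: [batch]
      exporters: [otlp]
    logs:
      receivers: [otlp]
      processors: [batch]
      exporters: [otlp]
""")


def render_collector_config(
    endpoint: str = "ingest.lightstep.com:443",   # example endpoint from the text
    header_name: str = "x-otlp-access-token",     # header name from the text
    token_env: str = "CLOUD_OBS_TOKEN",           # hypothetical variable name
) -> str:
    """Return Collector YAML that exports all three signals over OTLP."""
    return COLLECTOR_CONFIG.substitute(
        endpoint=endpoint, header_name=header_name, token_env=token_env
    )


config = render_collector_config()
print(config)
```

Referencing the token through an environment variable keeps the secret out of the file, so the rendered config can be committed or shared without exposing credentials.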

As highlighted in documentation from Matrix Marketing Group, ensuring proper mapping of “Resource Attributes” at this stage is vital to keeping services, hosts, and environments consistently identified across your dashboards.
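One common way to set resource attributes is the standard `OTEL_RESOURCE_ATTRIBUTES` environment variable, which OpenTelemetry SDKs read as comma-separated `key=value` pairs. The sketch below builds that string. The attribute values are placeholders, and which attributes the platform actually uses to identify a service is an assumption to check against its documentation.

```python
from urllib.parse import quote


def resource_attributes_env(attributes: dict) -> str:
    """Format attributes as comma-separated key=value pairs.

    Keys and values are percent-encoded so that ',' and '=' inside a
    value cannot be mistaken for separators.
    """
    return ",".join(
        f"{quote(str(key), safe='')}={quote(str(value), safe='')}"
        for key, value in attributes.items()
    )


# Placeholder values for illustration.
env_value = resource_attributes_env(
    {
        "service.name": "checkout",
        "service.version": "1.4.2",
        "cloud.region": "us-east-1",
    }
)
print(f"OTEL_RESOURCE_ATTRIBUTES={env_value}")
```

Setting these once per deployment, rather than in application code, helps keep the same service identified the same way across logs, metrics, and traces.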

What Research Firms Say About Unified OTLP Ingestion

Leading research firms like Gartner and Forrester emphasize that the future of the $17 billion observability market is “Open by Default.” Gartner’s Magic Quadrant for APM now specifically evaluates a vendor’s “OpenTelemetry native capabilities” as a core strength.

- Gartner: Predicts that by 2026, 50% of enterprises will have transitioned to OpenTelemetry-based ingestion to avoid vendor lock-in.

- Forrester: Notes that “Unified Observability” is the top priority for CTOs looking to reduce tool sprawl.

- 605 Research: Suggests that native OTLP support reduces the “Time to Value” for new observability deployments by 50%.

Statistical Overview of Observability Trends

| Statistic | Value | Source |
| --- | --- | --- |
| Growth in OTel Adoption | 54% YoY | CNCF Annual Report |
| Reduction in MTTR | 35% | IDC Unified Research |
| Cost Savings from Tool Consolidation | 20-30% | Forrester TEI Report |
| Enterprise OTel Commitment | 70% | Gartner 2025 Forecast |

Conclusion: The Path Forward with Unified Observability

The launch of Cloud Observability’s native OpenTelemetry OTLP ingestion API marks the end of the “Proprietary Agent Era.” By unifying logs, metrics, and traces into a single, standard-compliant stream, businesses can finally achieve the visibility they need without the overhead they fear.

Key Learning Points:

- Native OTLP support simplifies the telemetry pipeline.

- Standardization drives cost efficiency and portability.

- Correlation between logs and traces is the “killer feature” of modern observability.


People Also Ask (FAQ) about Unified Observability

Why should I use OTLP instead of a vendor agent?

OTLP is a vendor-neutral standard. Using it prevents lock-in, allows you to switch backends easily, and leverages a large contributor community for faster bug fixes and feature updates.

Does Cloud Observability support OTLP over HTTP or gRPC?

The new ingestion API supports both gRPC and HTTP/Protobuf, providing flexibility for different network environments and firewall constraints common in enterprise security architectures.
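For readers choosing between the two transports, the practical difference is mostly in addressing: OTLP over gRPC uses a single endpoint, while OTLP over HTTP uses one URL path per signal. The sketch below builds the per-signal URLs using the default paths and ports from the OTLP specification. Whether the platform's HTTP endpoint uses these default paths is an assumption, and the base URL is only the example host mentioned earlier in this article.

```python
# Default per-signal paths and ports defined by the OTLP specification.
OTLP_HTTP_PATHS = {
    "traces": "/v1/traces",
    "metrics": "/v1/metrics",
    "logs": "/v1/logs",
}
OTLP_DEFAULT_PORTS = {"grpc": 4317, "http/protobuf": 4318}


def otlp_http_urls(base_url: str) -> dict:
    """Return the per-signal OTLP/HTTP URLs for a base endpoint."""
    base = base_url.rstrip("/")
    return {signal: base + path for signal, path in OTLP_HTTP_PATHS.items()}


# Assumed base URL, built from the example host used in this article.
urls = otlp_http_urls("https://ingest.lightstep.com:443/")
for signal, url in urls.items():
    print(signal, url)
```

HTTP tends to pass through proxies and firewalls that block long-lived gRPC streams, which is usually the deciding factor in locked-down enterprise networks.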

Is OpenTelemetry logging ready for production?

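Yes, for many languages. The OpenTelemetry logs data model and OTLP log transport are stable, though SDK maturity still varies by language, so check the status for your stack. Log records carry structured attributes and trace context, making them far more searchable than traditional plaintext formats.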

How does native ingestion impact data latency?

By removing the middleman translation layer, data is processed immediately. This can reduce the time from “event occurrence” to “dashboard visibility” by several seconds, critical for high-frequency trading or real-time systems.

Can I mix legacy agents with the new OTLP API?

Absolutely. Most organizations use a hybrid approach, maintaining legacy agents for older systems while using the OTLP Ingestion API for all new microservices and cloud-native workloads.

References

- Cloud Native Computing Foundation (CNCF) – OpenTelemetry Project Status.

- Deloitte Insights – State of the Cloud Report 2025.

- Gartner – Magic Quadrant for APM and Observability.

- IDC – The Business Value of Unified Observability Platforms.

- RedMonk – The Developer Experience Gap.

- Matrix Marketing Group – Technical SEO and Content Strategy for SaaS.

- PrescientIQ.ai – AI-Driven Infrastructure Analysis.

- MatrixLabX.com – Future of DevOps Engineering.