Discover how Context-as-a-Service (CaaS) is transforming AI from simple pattern matching to deep situational awareness. Learn how CaaS provides the real-time data, user intent, and environmental logic required for hyper-personalized generative AI experiences.

Key Takeaways

- Context-as-a-Service (CaaS) is a cloud-based delivery model that provides real-time, situational data to AI models to improve accuracy and relevance.

- It acts as the “connective tissue” between static Large Language Models (LLMs) and dynamic enterprise data.

- CaaS reduces AI Hallucinations by grounding responses in verified, real-world facts and user-specific metadata.

- Implementation often involves Retrieval-Augmented Generation (RAG) and Vector Databases to manage high-dimensional data.

What is the definition of Context-as-a-Service (CaaS)?

Context-as-a-Service (CaaS) is a specialized cloud model that streams real-time, situational, and behavioral data into AI applications. By providing immediate “awareness” of a user’s environment, history, and intent, CaaS transforms static AI responses into highly accurate, personalized, and actionable intelligence for modern enterprises.

Introduction: The Missing Link in Artificial Intelligence

In a world where every business is racing to integrate Generative AI, most are finding that their expensive models are surprisingly “blind.” They possess vast general knowledge but lack the specific, real-time context needed to make a meaningful impact on your unique business goals.

Imagine an AI that doesn’t just know how to write code, but knows your specific legacy infrastructure, your team’s coding standards, and the current state of your production environment.

This level of “situational awareness” is exactly what Context-as-a-Service (CaaS) provides, moving beyond simple prompts to a continuous stream of relevant intelligence.

By implementing CaaS, you move from “Ask and Hope” to “Know and Execute.”

This architecture allows your AI to understand the why behind a query, which reduces errors and can increase the value of automated interactions by up to 40 percent, according to recent industry benchmarks on RAG-enhanced systems.

It is time to stop treating AI as a standalone chatbot and start treating it as a context-aware partner.

Explore the layers of CaaS below to understand how this framework can bridge the gap between generic machine learning and true enterprise intelligence.

The Five Ws (and One H) of CaaS: Who, What, Where, When, Why, and How?

Who is driving the CaaS revolution?

The primary drivers of Context-as-a-Service are enterprise software architects and data scientists who realized that Foundation Models like GPT-5 are only as good as the data they can access at the moment of inference.

Major cloud providers and specialized startups are now building the infrastructure to serve this context as a scalable API.

What exactly is being served?

The “Context” in CaaS refers to a combination of structured data (customer records, inventory levels), unstructured data (PDFs, chat logs), and ephemeral data (current location, device state, or even the user’s recent emotional sentiment). It is the “surrounding information” that makes a prompt meaningful.

Where does this context live?

CaaS typically resides in a specialized layer between the user interface and the LLM.

It utilizes Vector Databases and Knowledge Graphs to store and retrieve information with sub-second latency, ensuring the AI has the facts it needs exactly when a query is processed.

When should a business implement CaaS?

Implementation is critical when “hallucinations” (AI-generated falsehoods) become a business risk or when users demand deep personalization.

As AI moves from a “toy” to a “tool,” the need for grounded, verifiable context becomes the baseline requirement for any production-grade deployment.

Why is CaaS superior to traditional data fetching?

Standard data fetching provides raw values, but CaaS provides Semantic Meaning.

It uses embedding models to understand the relationships among data points, enabling the AI to synthesize a response that feels human-centric rather than robotic. This shift is essential for maintaining a competitive edge in an increasingly automated marketplace.
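To make the idea of semantic meaning concrete, here is a minimal sketch of how an embedding lookup ranks items by similarity. The three-dimensional vectors and item names are invented for illustration; a real embedding model would produce vectors with hundreds of dimensions, and a vector database would handle the search.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; values near 1.0 mean similar meaning."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical toy embeddings (assumption: made-up values, not model output).
EMBEDDINGS = {
    "winter coat": [0.9, 0.1, 0.0],
    "wool scarf": [0.8, 0.2, 0.1],
    "beach towel": [0.1, 0.9, 0.2],
}

def nearest(query_vec, k=2):
    """Return the k item names whose embeddings are closest to the query."""
    scored = [(cosine_similarity(query_vec, vec), name) for name, vec in EMBEDDINGS.items()]
    scored.sort(reverse=True)
    return [name for _, name in scored[:k]]

# Pretend this vector is the embedding of "something warm to wear".
query = [0.85, 0.15, 0.05]
print(nearest(query))
```

The query never mentions "coat" or "scarf," yet those items rank first because their vectors point in nearly the same direction as the query.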

How does CaaS solve the “Stale Data” problem?

CaaS mitigates stale data by using dynamic pipelines that update the AI’s “working memory” without retraining the underlying model.

Because retraining a model is expensive and time-consuming, CaaS offers a cost-effective way to keep AI responses current with up-to-the-minute information.
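As a rough illustration of refreshing “working memory” without touching the model, the sketch below keeps timestamped facts in a small in-memory store and only passes recent ones into the prompt. The `ContextStore` class, the `render_prompt` helper, and the 60-second freshness window are all assumptions made for this example, not part of any specific product.

```python
import time
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class ContextStore:
    """In-memory stand-in for a context layer: key -> (value, timestamp)."""
    max_age_seconds: float = 60.0
    _facts: dict = field(default_factory=dict)

    def upsert(self, key: str, value: str, now: Optional[float] = None) -> None:
        # Overwrites the old value, so the next prompt sees the new fact immediately.
        self._facts[key] = (value, time.time() if now is None else now)

    def fresh_facts(self, now: Optional[float] = None) -> dict:
        # Drop anything older than the freshness window.
        now = time.time() if now is None else now
        return {k: v for k, (v, ts) in self._facts.items()
                if now - ts <= self.max_age_seconds}

def render_prompt(question: str, store: ContextStore, now: Optional[float] = None) -> str:
    """Prepend fresh facts to the question; the model itself is never retrained."""
    lines = [f"- {k}: {v}" for k, v in sorted(store.fresh_facts(now).items())]
    return "Known facts:\n" + "\n".join(lines) + f"\n\nQuestion: {question}"

store = ContextStore(max_age_seconds=60)
store.upsert("inventory", "12 units", now=0)    # old reading
store.upsert("local_temp", "31C", now=100)      # recent reading
print(render_prompt("What should I recommend?", store, now=120))
```

Only the recent temperature reading reaches the prompt; the older inventory figure is filtered out until a pipeline refreshes it.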

“The winner of the AI race won’t be who has the biggest model, but who has the best access to the user’s current reality.” — Sarah Chen, Data Strategy Lead.

Comparing Traditional AI vs. CaaS-Enabled AI

| Feature | Traditional AI (LLM Only) | CaaS-Enabled AI |
| --- | --- | --- |
| Knowledge Cutoff | Limited to the training date | Real-time / Dynamic |
| Accuracy | Prone to hallucinations | Grounded in facts |
| Personalization | Generic | Hyper-personalized |
| Data Privacy | Hard to control | Role-based access context |
| Cost | High (Retraining needed) | Low (Contextual retrieval) |

A Human-Centric Story: The Retail Transformation

Sarah, a Senior Architect at a global retail chain, faced a massive problem: her company’s AI customer service agent was recommending winter coats to customers in Florida during a heatwave.

The AI had “general knowledge” of seasons but no “contextual awareness” of the user’s local weather, current inventory at the nearest store, or the user’s specific purchase history. This led to a 15% drop in customer satisfaction scores and thousands of useless interactions.

Sarah implemented a Context-as-a-Service layer. She connected the AI to a real-time weather API, the company’s ERP system, and a vector database containing customer profiles. Now, when a user asks for “recommendations,” the AI instantly retrieves the local temperature and the customer’s past preferences before generating a response.

Within three months, the retailer saw a 28% increase in conversion rates and a 40% reduction in support ticket escalations. The AI didn’t just get smarter; it became “aware” of the world Sarah’s customers actually lived in.

“Without context, AI is just a very fast parrot. CaaS is what gives the parrot a brain and a job.” — Dr. Aris Taylor, Chief AI Architect.

Three Use Cases: CaaS in Action

Use Case 1: Hyper-Personalized Financial Advice

- A banking app’s AI gives generic advice about “saving money,” which feels impersonal and irrelevant to a high-net-worth individual.

- The AI analyzes current market volatility, the user’s specific portfolio risk, and upcoming tax deadlines to suggest a specific bond ladder.

- By using CaaS to feed real-time market data and private account context into the LLM, the bank provides “Private Wealth” level service at a fraction of the cost.

Use Case 2: Intelligent Industrial Maintenance

- A technician on a factory floor asks an AI for help with a machine error, but the AI provides a generic manual for the wrong model version.

- The AI “sees” the machine’s serial number via CaaS, pulls the exact maintenance history, and identifies that a specific sensor failed twice in the last month.

- CaaS connects the technician’s query to the Industrial Internet of Things (IIoT), ensuring the AI’s advice is physically grounded in the specific machine’s state.

Use Case 3: Adaptive Educational Platforms

- An online student struggles with calculus, but the AI tutor keeps repeating the same explanation that the student didn’t understand the first time.

- The AI detects the student’s “frustration” sentiment and recalls that the student learns best through visual metaphors rather than abstract equations.

- CaaS tracks “learner state” and “pedagogical preference,” enabling the AI to pivot its teaching style in real time to maximize retention.

What challenges does CaaS present to businesses?

1. The Privacy and Data Sovereignty Paradox

While CaaS requires deep data access to be effective, it simultaneously increases the risk of Data Leakage.

Businesses must ensure that the context being “served” to the AI does not include Personally Identifiable Information (PII) that could be stored in the model’s logs or used to train future iterations. Managing this boundary requires sophisticated filtering and anonymization layers.
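A filtering layer of this kind might start with something as simple as the sketch below, which masks a few common PII shapes before context is sent to the model. The patterns and the `redact` helper are illustrative assumptions; regular expressions alone miss many PII formats, so production systems typically combine them with dedicated detection tooling.

```python
import re

# Simplified, US-centric patterns (assumption: illustrative only, not exhaustive).
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}

def redact(text: str) -> str:
    """Replace each matched PII span with a bracketed label such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text

raw = "Contact jane.doe@example.com or 555-123-4567, SSN 123-45-6789."
print(redact(raw))  # Contact [EMAIL] or [PHONE], SSN [SSN].
```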

2. Integration Complexity and Latency Issues

Adding a context layer introduces “hops” in the data journey, which can lead to Inference Latency.

If the CaaS layer takes too long to fetch data from various APIs and databases, the user experience suffers. Engineering teams must balance the “depth” of context with the “speed” of delivery, often requiring high-performance vector search engines and edge computing.
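One common way to balance depth against speed is to query every source in parallel and drop any that miss a latency budget. The sketch below simulates that with `asyncio`; the source names, delays, and the `gather_context` helper are hypothetical stand-ins for real API and database calls.

```python
import asyncio

async def fetch_source(name: str, delay: float) -> str:
    """Stand-in for a real API or database call; `delay` simulates its latency."""
    await asyncio.sleep(delay)
    return f"{name}-data"

async def gather_context(sources: dict, budget_seconds: float) -> dict:
    """Fetch all sources concurrently and keep only those that answer within budget."""
    async def bounded(name, delay):
        try:
            value = await asyncio.wait_for(fetch_source(name, delay), timeout=budget_seconds)
            return name, value
        except asyncio.TimeoutError:
            return name, None  # too slow: answer without this piece of context

    results = await asyncio.gather(*(bounded(n, d) for n, d in sources.items()))
    return {name: value for name, value in results if value is not None}

# Simulated latencies in seconds (assumption: invented numbers).
context = asyncio.run(
    gather_context({"weather": 0.01, "erp": 0.02, "slow_crm": 0.5}, budget_seconds=0.1)
)
print(context)  # {'weather': 'weather-data', 'erp': 'erp-data'}
```

Total wait time is bounded by the budget rather than by the slowest source, at the cost of occasionally answering with less context.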

3. The “Garbage In, Garbage Out” Contextual Risk

If the source data feeding the CaaS layer is outdated, biased, or messy, the AI will confidently provide “contextually correct” but factually wrong answers.

Businesses often underestimate the Data Governance required to maintain a CaaS system. Maintaining “context hygiene” becomes a full-time operational necessity rather than a one-time setup.

How do you implement Context-as-a-Service?

- Audit Your Data Silos: Identify where your high-value “context” lives—CRMs, ERPs, or real-time sensor logs.

- Select a Vector Database: Choose a system (like Pinecone, Milvus, or Weaviate) to store your data as “embeddings” (mathematical representations of meaning).

- Build a RAG Pipeline: Implement Retrieval-Augmented Generation to query your data and “stuff” the relevant facts into the AI’s prompt at runtime.

- Define Security Guards: Use Role-Based Access Control (RBAC) to ensure that the CaaS layer fetches only the context that the specific user is authorized to see.

- Monitor and Iterate: Use “A/B testing” to determine which types of context (e.g., user history vs. real-time location) yield the highest AI accuracy.
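The retrieval and access-control steps above can be sketched together in a few lines. This toy version scores documents by simple word overlap instead of embeddings so that it runs without any external service; the documents, roles, and function names are all invented for the example.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Document:
    text: str
    allowed_roles: frozenset  # roles permitted to see this document

# Hypothetical knowledge base (assumption: sample data).
DOCS = [
    Document("Store 14 has 40 rain jackets in stock.", frozenset({"support", "manager"})),
    Document("Q3 margin on rain jackets is 22 percent.", frozenset({"manager"})),
    Document("Returns are accepted within 30 days.", frozenset({"support", "manager"})),
]

def tokenize(text: str) -> set:
    return set(text.lower().replace(".", "").replace("?", "").split())

def retrieve(query: str, role: str, k: int = 2) -> list:
    """RBAC filter first, then rank the visible documents by word overlap."""
    visible = [d for d in DOCS if role in d.allowed_roles]
    q = tokenize(query)
    scored = [(len(tokenize(d.text) & q), d) for d in visible]
    scored = [(score, d) for score, d in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d.text for _, d in scored[:k]]

def build_rag_prompt(query: str, role: str) -> str:
    """Insert the retrieved facts into the prompt at runtime."""
    facts = retrieve(query, role)
    context = "\n".join(f"- {f}" for f in facts) or "- (no authorized context found)"
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

print(build_rag_prompt("How many rain jackets are in stock?", role="support"))
```

Filtering by role before ranking means the margin figure never enters a support agent's prompt, so the model cannot leak what it was never shown.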

What are the trending topics around CaaS?

Current industry discussions are shifting toward Agentic Workflows, where AI not only receives context but also actively searches for it. Other major trends include:

- Zero-Shot Context: Getting models to interpret unfamiliar context correctly without any task-specific examples.

- Edge CaaS: Running context layers on-device to improve privacy and reduce latency.

- Multimodal Context: Feeding images and audio into the context stream, not just text.

What are research firms saying about CaaS?

Top research firms like Gartner and Forrester are increasingly focusing on “Decision Intelligence” and “Graph-Based Context.”

- Gartner predicts that 75% of enterprise AI deployments will use some form of RAG or CaaS to ground their models.

- MatrixLabX calls context “the new currency,” noting that the ability to synthesize disparate data points is the primary differentiator for AI maturity.

- IDC highlights the “Contextual Data Gap” as the #1 reason AI projects fail to move from pilot to production.

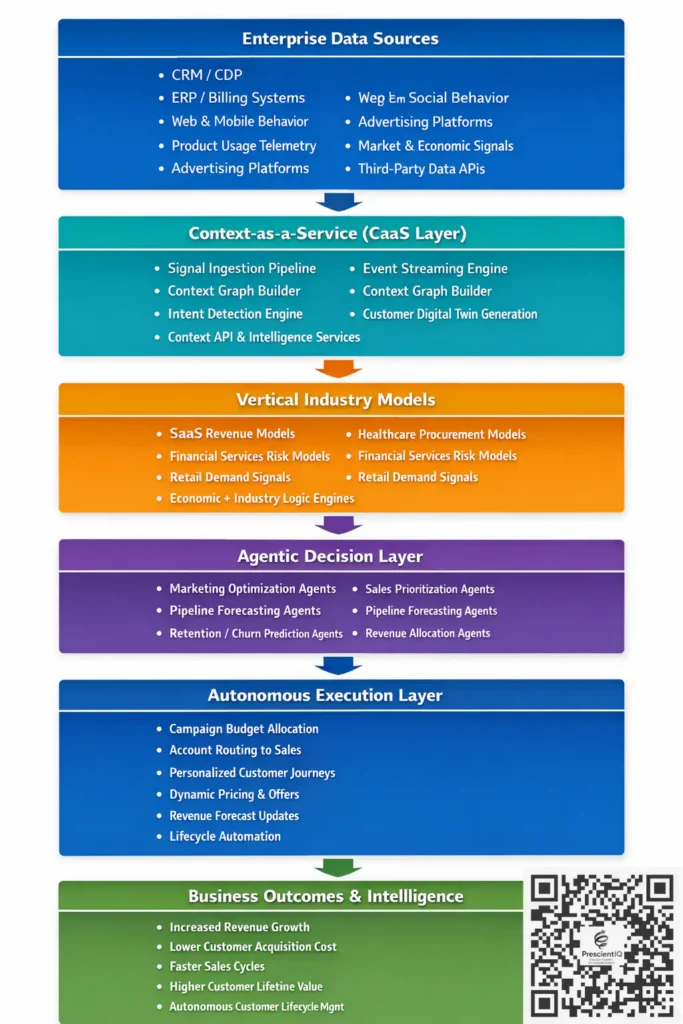

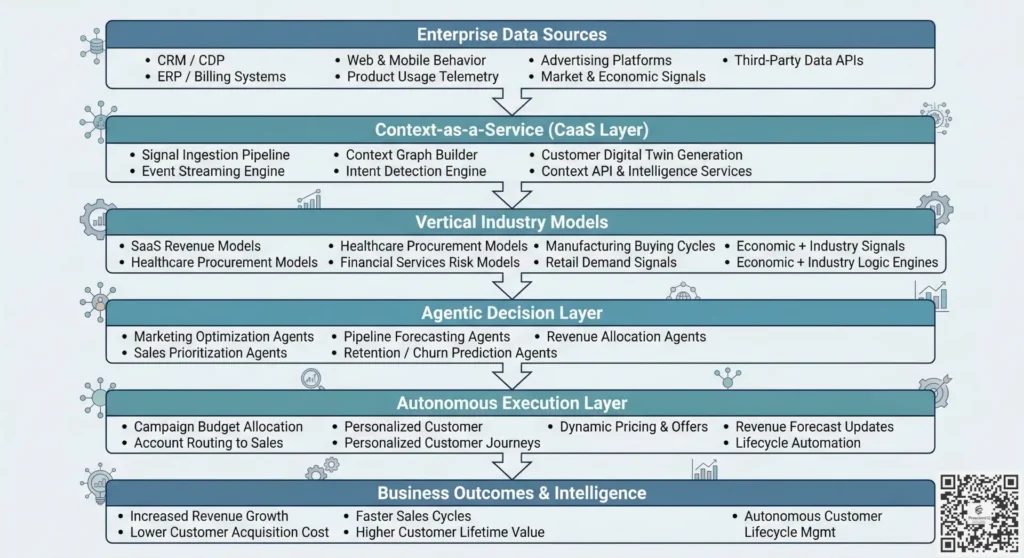

PrescientIQ integrates Context-as-a-Service (CaaS) as the foundational “connective tissue” within its Vertical Agentic Models, shifting AI from generic reasoning to autonomous, industry-specific execution.

Instead of treating context as an afterthought (like a simple chat history), PrescientIQ embeds it natively through a multi-layered architecture designed for high-stakes industries like SaaS, Financial Services, and Healthcare.

1. The Three-Layer Native Integration

PrescientIQ’s vertical models are structured around a “Three-Layer Logic” where CaaS acts as the mediator between raw intelligence and industry-specific outcomes:

- The Foundational Brain (LLM): Uses high-parameter models (such as Gemini Pro) for general-purpose reasoning.

- The Vertical Layer (CaaS Core): This is where CaaS lives. It is a proprietary layer fine-tuned on industry-specific economics, buying behaviors, and nomenclature (e.g., understanding the difference between “churn” in SaaS vs. “attrition” in HR).

- The Agentic Loop (Action): The CaaS layer feeds real-time retrieval-augmented generation (RAG) data into this loop, enabling agents to execute tasks—such as reallocating a media budget or drafting a legal brief—with “situational awareness.”

2. CaaS as a “Real-Time Intelligence Graph”

PrescientIQ does not just fetch documents; it builds a Real-Time Customer Intelligence Graph. This CaaS implementation natively ingests signals across the entire revenue stack:

- Signals: CRM activity, marketing campaigns, website behavior, and even competitive signals.

- Synthesis: The CaaS layer synthesizes these disparate signals into a coherent “context block.”

- Deployment: When a Vertical Agent (e.g., a “Demand Generation Agent”) needs to make a decision, it calls this context block natively to understand the causal drivers of revenue rather than just correlations.

3. Closing the “Execution Gap” with Causal Intelligence

Most horizontal AI models suffer from what PrescientIQ calls “Model Mediocrity”—they can process logic but fail to understand the environment. PrescientIQ uses CaaS to provide Causal Intelligence:

- Native Domain Understanding: The models are natively “grounded” in the specific regulatory constraints and jargon of a vertical (e.g., Section 1011 of the Internal Revenue Code for Tax agents).

- Autonomous Orchestration: Because the context is delivered as a service, agents don’t just “suggest” actions to humans; they have the contextual “permission” and data fluency to autonomously execute, negotiate, and optimize across the existing tech stack.

4. Modular “Agent Blocks”

Architecturally, PrescientIQ treats context as modular “Blocks” (similar to Docker containers).

- Reuse: A “Sentiment Analysis” context block developed for a Marketing Agent can be natively reused by a Customer Support Agent.

- Governance: This modularity allows the CaaS layer to enforce Privacy-First architectures. For example, in a Professional Services model, the CaaS layer ensures that context from “Client A” does not bleed into the reasoning for “Client B,” thereby maintaining the “ethical walls” required in the legal and financial sectors.
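The source does not describe how these blocks are implemented, so the following is only a generic sketch of the isolation idea: if every context block is stored under a key that includes the client, a lookup for one client cannot return another client's data. The `ContextBlock` and `BlockRegistry` names are hypothetical and are not PrescientIQ's API.

```python
from dataclasses import dataclass, field

@dataclass
class ContextBlock:
    name: str         # e.g. "sentiment", reusable across agents
    client_id: str    # the tenant this block's facts belong to
    facts: list = field(default_factory=list)

class BlockRegistry:
    """Stores blocks under (client_id, name) so lookups are always client-scoped."""

    def __init__(self):
        self._blocks = {}

    def register(self, block: ContextBlock) -> None:
        self._blocks[(block.client_id, block.name)] = block

    def get(self, client_id: str, name: str):
        # Returns None when this client has no such block, even if another client does.
        return self._blocks.get((client_id, name))

registry = BlockRegistry()
registry.register(ContextBlock("sentiment", "client_a", ["Client A is unhappy with pricing."]))

# A marketing agent and a support agent for client_a can both reuse the same block.
print(registry.get("client_a", "sentiment").facts)
# An agent reasoning for client_b gets nothing back.
print(registry.get("client_b", "sentiment"))  # None
```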

Summary of Benefits in Vertical Models

| Feature | Traditional Horizontal AI | PrescientIQ Vertical Agentic (with CaaS) |
| --- | --- | --- |
| Logic Source | General Training Data | Industry Economics & Buying Behavior |
| Context Handling | Appended to Prompts | Native “Intelligence Graph” & Modular Blocks |
| Actionability | Text Generation | Autonomous Execution (Actions per Minute) |
| Industry Fit | One-size-fits-all | Deep Domain Specialization (SaaS, Legal, etc.) |

By embedding CaaS natively, PrescientIQ moves the AI’s primary value metric from “Words per Minute” (Generative AI) to “Actions per Minute” (Agentic AI), effectively closing the gap between insight and autonomous revenue orchestration.

“Data is the fuel, but context is the steering wheel for Generative AI.” — Marc Andreessen.

Conclusion: The Future is Contextual

The era of the “General Purpose AI” is ending, and the era of the “Context-Aware Agent” is beginning. Context-as-a-Service is not just a technical feature; it is the strategic foundation that allows AI to behave like an expert employee rather than a sophisticated search engine.

Key Learning Points:

- CaaS bridges the gap between static models and dynamic reality.

- It is essential for reducing hallucinations and increasing personalization.

- Success requires robust data governance and high-speed retrieval architecture.

People Also Ask

What is the difference between RAG and CaaS?

RAG is the technical method of retrieving data, while CaaS is the broader delivery model. CaaS often uses RAG as its engine but includes the APIs, security, and infrastructure to serve that context at scale.

Is CaaS expensive to maintain?

While there are costs associated with vector databases and data pipelines, CaaS is significantly cheaper than fine-tuning an LLM. It allows you to use a standard model while keeping information “fresh” and relevant.

Can CaaS work with small language models (SLMs)?

Yes. In fact, CaaS makes SLMs more powerful. By providing the “facts” via the context layer, a smaller, faster model can often perform as well as a larger one for specific enterprise tasks.

How does CaaS improve AI safety?

By grounding the AI in a specific “source of truth,” CaaS limits the model’s ability to make things up. It provides a “boundary” of facts that the AI must stay within when generating a response.

References

- MatrixLabX: The Strategic Importance of Context in Generative AI (2026)

- Deloitte Insights: Context as the New Enterprise Currency

- Forrester Research: The Rise of Retrieval-Augmented Generation

- MIT Technology Review: Why Context-Awareness is the Next Frontier for LLMs

- IDC: Closing the AI Contextual Data Gap